Vossian Antonomasia

Automatic extraction of Vossian antonomasia from large newspaper corpora.

(Shout-out to Gerardus Vossius, 1577–1649.)

Some More Statistics

An “executable” version of this file is statistics.org.

Temporal Distribution

Let us check how Vossian Antonomasia (VA) is spread across the whole corpus:

echo "year articles cand wd wd+bl found true prec"

for year in $(seq 1987 2007); do

echo $year \

$(grep ^$year ../articles.tsv | cut -d' ' -f2) \

$(zcat ../theof_${year}.tsv.gz | wc -l) \

$(cat ../theof_${year}_wd.tsv | wc -l) \

$(cat ../theof_${year}_wda_bl.tsv | wc -l) \

$(../org.py -y ../README.org | grep ${year} | wc -l) \

$(../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -y -c -b ../README.org | grep ${year} | awk -F'\t' '{if ($2 == "D" || $3 == "True") print;}' | wc -l)

done

| year | articles | cand | wd | wd+bl | found | true | prec | ppm |

|---|---|---|---|---|---|---|---|---|

| 1987 | 106104 | 641432 | 5236 | 131 | 129 | 95 | 73.6 | 0.90 |

| 1988 | 104541 | 637132 | 5074 | 143 | 141 | 88 | 62.4 | 0.84 |

| 1989 | 102818 | 625894 | 4922 | 151 | 148 | 104 | 70.3 | 1.01 |

| 1990 | 98812 | 614164 | 4890 | 142 | 140 | 105 | 75.0 | 1.06 |

| 1991 | 85135 | 512582 | 4189 | 154 | 154 | 103 | 66.9 | 1.21 |

| 1992 | 82685 | 493808 | 4442 | 152 | 152 | 103 | 67.8 | 1.25 |

| 1993 | 79200 | 480883 | 4338 | 167 | 167 | 121 | 72.5 | 1.53 |

| 1994 | 74925 | 464278 | 4038 | 164 | 164 | 112 | 68.3 | 1.49 |

| 1995 | 85392 | 500404 | 4636 | 162 | 162 | 124 | 76.5 | 1.45 |

| 1996 | 79077 | 497688 | 4250 | 186 | 186 | 133 | 71.5 | 1.68 |

| 1997 | 85396 | 515759 | 4561 | 173 | 173 | 134 | 77.5 | 1.57 |

| 1998 | 89163 | 571010 | 5333 | 243 | 243 | 180 | 74.1 | 2.02 |

| 1999 | 91074 | 585464 | 5375 | 189 | 189 | 136 | 72.0 | 1.49 |

| 2000 | 94258 | 602240 | 4750 | 231 | 231 | 172 | 74.5 | 1.82 |

| 2001 | 96282 | 587644 | 4512 | 210 | 209 | 163 | 78.0 | 1.69 |

| 2002 | 97258 | 597289 | 4992 | 231 | 229 | 177 | 77.3 | 1.82 |

| 2003 | 94235 | 590890 | 4749 | 219 | 216 | 165 | 76.4 | 1.75 |

| 2004 | 91362 | 571894 | 4702 | 192 | 191 | 153 | 80.1 | 1.67 |

| 2005 | 90004 | 562027 | 4680 | 208 | 207 | 162 | 78.3 | 1.80 |

| 2006 | 87052 | 561203 | 4786 | 221 | 221 | 169 | 76.5 | 1.94 |

| 2007 | 39953 | 260778 | 2276 | 101 | 101 | 76 | 75.2 | 1.90 |

| sum | 1854726 | 11474463 | 96731 | 3770 | 3753 | 2775 | 73.9 | 1.50 |

| mean | 88320 | 546403 | 4606 | 180 | 179 | 132 | 73.7 | 1.49 |

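The table shows, per year, the number of articles, the number of candidate phrases (cand), the candidates that remain after matching against Wikidata (wd) and after additionally applying a blacklist (wd+bl), the candidates listed in README.org (found), and the candidates confirmed as VA by manual inspection (true). The column prec is the precision in percent (true divided by found) and ppm is the number of true VA per 1000 articles (true divided by articles); the loop above does not compute these two columns.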
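
The two derived columns can be recomputed from the raw counts. The following is a minimal sketch, not part of org.py: the function name derived_columns is made up for illustration, and it assumes that prec is the percentage of true VA among the found candidates and that ppm counts true VA per 1000 articles. Both assumptions reproduce the values in the table.

```python
def derived_columns(articles: int, found: int, true: int) -> tuple:
    """Return (prec, ppm) for one row of the table (hypothetical helper)."""
    prec = 100.0 * true / found      # precision in percent
    ppm = 1000.0 * true / articles   # true VA per 1000 articles
    return round(prec, 1), round(ppm, 2)


# Row for 1987: 106104 articles, 129 found, 95 true
print(derived_columns(106104, 129, 95))
# Row "sum": 1854726 articles, 3753 found, 2775 true
print(derived_columns(1854726, 3753, 2775))
```

The same ratio (true divided by articles, times 1000) is what the gnuplot script plots as "frequency (per mille)".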

Let us plot some of the columns:

reset

set datafile separator "\t"

set xlabel "year"

set ylabel "frequency"

set grid linetype 1 linecolor 0

set yrange [0:*]

set y2range [0:100]

set y2label 'precision'

set y2tics

set key bottom right

set style fill solid 1

set term svg enhanced size 800,600 dynamic fname "Noto Sans, Helvetica Neue, Helvetica, Arial, sans-serif" fsize 16

#set out "nyt_vossantos_over_time.svg"

plot data using 1:6 with linespoints pt 6 title 'candidates',\

data using 1:7 with linespoints pt 7 title 'VA',\

data using 1:8 with lines title 'precision' axes x1y2

# for arxiv paper

set term pdf enhanced lw 2

set out "nyt_vossantos_over_time.pdf"

replot

# for DSH paper

set term png enhanced size 2835,2126 font "Arial,40" lw 4

# set term png enhanced size 800,600 font "Arial,16" lw 2

set out "nyt_vossantos_over_time.png"

plot data using 1:6 with linespoints pt 6 ps 7 lc "black" title 'candidates',\

data using 1:7 with linespoints pt 7 ps 7 lc "black" title 'VA',\

data using 1:8 with lines lc "black" title 'precision' axes x1y2

# ---- relative values

set term svg enhanced size 800,600 dynamic fname "Noto Sans, Helvetica Neue, Helvetica, Arial, sans-serif" fsize 16

set out "nyt_vossantos_over_time_rel.svg"

set ylabel "frequency (per mille)"

set format y "%2.1f"

plot data using 1:($6/$2*1000) with linespoints pt 6 title 'candidates',\

data using 1:($7/$2*1000) with linespoints pt 7 title 'VA',\

data using 1:8 with lines title 'precision' axes x1y2

# for arxiv paper

set term pdf enhanced lw 2

set out "nyt_vossantos_over_time_rel.pdf"

replot

set term png enhanced size 2835,2126 font "Arial,40" lw 4

# set term png enhanced size 800,600 font "Arial,16" lw 2

set out "nyt_vossantos_over_time_rel.png"

plot data using 1:($6/$2*1000) with linespoints pt 6 ps 7 lc "black" title 'candidates',\

data using 1:($7/$2*1000) with linespoints pt 7 ps 7 lc "black" title 'VA',\

data using 1:8 with lines lc "black" title 'precision' axes x1y2

Absolute frequency:

Relative frequency:

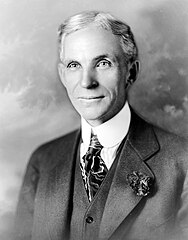

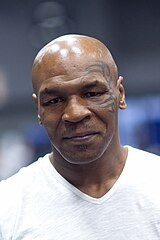

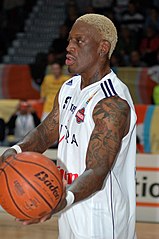

Top-40 VA Sources

Let us count the most frequent sources for Vossian Antonomasia:

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T ../README.org | sort | uniq -c | sort -nr | head -n40

| count | source |

|---|---|

| 68 | Michael Jordan |

| 58 | Rodney Dangerfield |

| 36 | Babe Ruth |

| 32 | Elvis Presley |

| 31 | Johnny Appleseed |

| 23 | Bill Gates |

| 21 | Pablo Picasso |

| 21 | Michelangelo |

| 21 | Donald Trump |

| 21 | Jackie Robinson |

| 21 | Madonna |

| 20 | P. T. Barnum |

| 20 | Tiger Woods |

| 18 | Martha Stewart |

| 16 | Henry Ford |

| 16 | William Shakespeare |

| 16 | Wolfgang Amadeus Mozart |

| 15 | Adolf Hitler |

| 14 | Greta Garbo |

| 14 | John Wayne |

| 14 | Mother Teresa |

| 13 | Napoleon |

| 13 | Ralph Nader |

| 12 | Leonardo da Vinci |

| 12 | Cal Ripken |

| 12 | Leo Tolstoy |

| 12 | Oprah Winfrey |

| 12 | Rosa Parks |

| 12 | Susan Lucci |

| 11 | Walt Disney |

| 11 | Willie Horton |

| 11 | Rembrandt |

| 10 | Albert Einstein |

| 10 | Thomas Edison |

| 10 | Mike Tyson |

| 10 | Julia Child |

| 9 | Ross Perot |

| 9 | Dennis Rodman |

| 8 | James Dean |

| 8 | Mikhail Gorbachev |

Top-40 Gallery

The images are pulled from Wikidata via property P18 (one entity has no image in Wikidata).

Categories

online

Extract the categories of articles:

export PYTHONIOENCODING=utf-8

for year in $(seq 1987 2007); do

./nyt.py --category ../nyt_corpus_${year}.tar.gz \

| sed -e "s/^nyt_corpus_//" -e "s/\.har\//\//" -e "s/\.xml\t/\t/" \

| sort >> nyt_categories.tsv

done
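
To make the effect of the three sed substitutions explicit, here is a small Python sketch that applies the same rewrites to a single line. The input line is hypothetical: the exact output format of nyt.py is an assumption inferred from the sed expressions, and normalize_line is a name chosen for illustration.

```python
import re


def normalize_line(line: str) -> str:
    """Mimic the three sed substitutions on one line (first match only)."""
    line = re.sub(r"^nyt_corpus_", "", line, count=1)  # drop the archive prefix
    line = re.sub(r"\.har/", "/", line, count=1)       # keep the year as first path part
    line = re.sub(r"\.xml\t", "\t", line, count=1)     # drop the file extension
    return line


# Hypothetical input line (assumed format: path, tab, category)
example = "nyt_corpus_1987.har/01/20/0005135.xml\tArts"
print(normalize_line(example))
```

The result has the form year/month/day/number, which matches the article identifiers used in the VA lists further below.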

Compute frequency distribution over all articles:

cut -d$'\t' -f2 nyt_categories.tsv | sort -S1G | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

> nyt_categories_distrib.tsv
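
The cut, sort, uniq pipeline above builds a frequency table over the second column. A rough Python equivalent is sketched below; the function name and the toy input are made up, and the input format (article id, tab, category) is assumed from the shell commands.

```python
from collections import Counter


def category_distribution(lines):
    """Count how often each value occurs in the second tab-separated column."""
    counts = Counter()
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        counts[fields[1]] += 1  # an empty category is counted under ""
    return counts


# Toy input in the assumed format of nyt_categories.tsv
toy = [
    "1987/01/01/0000001\tSports\n",
    "1987/01/01/0000002\tNew York and Region\n",
    "1987/01/01/0000003\tSports\n",
    "1987/01/01/0000004\t\n",
]
dist = category_distribution(toy)
for category, count in dist.most_common():
    print(f"{category}\t{count}")
```

Because the counts are kept as numbers next to the full category string, multi-word and empty categories are ranked correctly without any field-separator handling.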

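Check the number of categories and list the most frequent ones. Note that sort is called without -t$'\t' here and therefore splits fields on whitespace, so categories whose name is empty or contains spaces (for example “New York and Region”) are missing from this top list: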

echo articles $(wc -l < nyt_categories.tsv)

echo categories $(wc -l < nyt_categories_distrib.tsv)

echo ""

sort -nrk2 nyt_categories_distrib.tsv | head

| articles | 1854726 |

|---|---|

| categories | 1580 |

| Business | 291982 |

| Sports | 160888 |

| Opinion | 134428 |

| U.S. | 89389 |

| Arts | 88460 |

| World | 79786 |

| Style | 65071 |

| Obituaries | 19430 |

| Magazine | 11464 |

| Travel | 10440 |

Collect the categories of the articles that contain VA and compare them with the distribution over all articles:

echo "VA" $(../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T ../README.org | wc -l) articles $(wc -l < ../nyt_categories.tsv)

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T -f ../README.org | join ../nyt_categories.tsv - | sed "s/ /\t/" | awk -F'\t' '{print $2}' \

| sort | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

| join -t$'\t' -o1.2,1.1,2.2 - ../nyt_categories_distrib.tsv \

| sort -nr | head -n20

| VA | 2646 | category | articles | 1854726 |

|---|---|---|---|---|

| 336 | 12.7% | Sports | 160888 | 8.7% |

| 334 | 12.6% | Arts | 88460 | 4.8% |

| 290 | 11.0% | New York and Region | 221897 | 12.0% |

| 237 | 9.0% | Arts; Books | 35475 | 1.9% |

| 158 | 6.0% | Movies; Arts | 27759 | 1.5% |

| 109 | 4.1% | Business | 291982 | 15.7% |

| 102 | 3.9% | Opinion | 134428 | 7.2% |

| 96 | 3.6% | U.S. | 89389 | 4.8% |

| 95 | 3.6% | Magazine | 11464 | 0.6% |

| 62 | 2.3% | Style | 65071 | 3.5% |

| 61 | 2.3% | Arts; Theater | 13283 | 0.7% |

| 46 | 1.7% | World | 79786 | 4.3% |

| 39 | 1.5% | Home and Garden; Style | 13978 | 0.8% |

| 32 | 1.2% | Travel | 10440 | 0.6% |

| 31 | 1.2% | Technology; Business | 23283 | 1.3% |

| 27 | 1.0% | (empty) | 42157 | 2.3% |

| 25 | 0.9% | Week in Review | 17107 | 0.9% |

| 25 | 0.9% | Home and Garden | 5546 | 0.3% |

| 17 | 0.6% | World; Washington | 24817 | 1.3% |

| 17 | 0.6% | Style; Magazine | 1519 | 0.1% |
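
The percentage columns in this table relate each count to the totals in the header row (2646 VA and 1854726 articles). A minimal Python sketch of that computation follows; the function share_table is hypothetical, and the three categories are copied from the table above.

```python
def share_table(va_counts, all_counts, va_total, all_total):
    """Return rows (VA, VA share %, category, articles, article share %)."""
    rows = []
    for category, va in va_counts.items():
        n = all_counts[category]
        rows.append((va, round(100 * va / va_total, 1), category,
                     n, round(100 * n / all_total, 1)))
    return sorted(rows, reverse=True)  # largest VA count first


# Counts taken from the table above
va_counts = {"Business": 109, "Sports": 336, "Arts": 334}
all_counts = {"Business": 291982, "Sports": 160888, "Arts": 88460}
rows = share_table(va_counts, all_counts, va_total=2646, all_total=1854726)
for row in rows:
    print(row)
```

Comparing the two shares per row shows where VA is over-represented (Arts: 12.6% of VA versus 4.8% of articles) or under-represented (Business: 4.1% versus 15.7%).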

desks

Extract the desks of the articles:

export PYTHONIOENCODING=utf-8

for year in $(seq 1987 2007); do

./nyt.py --desk ../nyt_corpus_${year}.tar.gz \

| sed -e "s/^nyt_corpus_//" -e "s/\.har\//\//" -e "s/\.xml\t/\t/" \

| sort >> nyt_desks.tsv

done

Compute frequency distribution over all articles:

cut -d$'\t' -f2 nyt_desks.tsv | sort -S1G | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

> nyt_desks_distrib.tsv

Check the number of desks (labelled “categories” in the output) and list the most frequent ones:

echo articles $(wc -l < nyt_desks.tsv)

echo categories $(wc -l < nyt_desks_distrib.tsv)

echo ""

sort -t$'\t' -nrk2 nyt_desks_distrib.tsv | head

| articles | 1854727 |

|---|---|

| categories | 398 |

| Metropolitan Desk | 237896 |

| Financial Desk | 206958 |

| Sports Desk | 174823 |

| National Desk | 143489 |

| Editorial Desk | 131762 |

| Foreign Desk | 129732 |

| Classified | 129660 |

| Business/Financial Desk | 112951 |

| Society Desk | 44032 |

| Cultural Desk | 40342 |

Collect the desks of the articles that contain VA and compare them with the distribution over all articles:

echo "VA" $(./org.py -T README.org | wc -l) articles $(wc -l < nyt_desks.tsv)

./org.py -T -f README.org | join nyt_desks.tsv - | sed "s/ /\t/" | awk -F'\t' '{print $2}' \

| sort | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

| join -t$'\t' -o1.2,1.1,2.2 - nyt_desks_distrib.tsv \

| sort -nr | head -n20

| VA | 2764 | desk | articles | 1854727 |

|---|---|---|---|---|

| 133 | 4.8% | Sports Desk | 174823 | 9.4% |

| 77 | 2.8% | Cultural Desk | 40342 | 2.2% |

| 68 | 2.5% | Book Review Desk | 32737 | 1.8% |

| 61 | 2.2% | National Desk | 143489 | 7.7% |

| 54 | 2.0% | Financial Desk | 206958 | 11.2% |

| 51 | 1.8% | Metropolitan Desk | 237896 | 12.8% |

| 46 | 1.7% | Weekend Desk | 18814 | 1.0% |

| 38 | 1.4% | Arts & Leisure Desk | 6742 | 0.4% |

| 35 | 1.3% | Editorial Desk | 131762 | 7.1% |

| 31 | 1.1% | Foreign Desk | 129732 | 7.0% |

| 31 | 1.1% | Arts and Leisure Desk | 27765 | 1.5% |

| 25 | 0.9% | Magazine Desk | 25433 | 1.4% |

| 25 | 0.9% | Long Island Weekly Desk | 20453 | 1.1% |

| 22 | 0.8% | Living Desk | 6843 | 0.4% |

| 19 | 0.7% | Home Desk | 8391 | 0.5% |

| 15 | 0.5% | Week in Review Desk | 21897 | 1.2% |

| 14 | 0.5% | Style Desk | 21569 | 1.2% |

| 13 | 0.5% | Styles of The Times | 2794 | 0.2% |

| 12 | 0.4% | (empty) | 6288 | 0.3% |

| 9 | 0.3% | Travel Desk | 23277 | 1.3% |

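Sidenote: The desk metadata contains many errors and inconsistencies; for example, “Arts & Leisure Desk” and “Arts and Leisure Desk” are counted as separate desks.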

Authors

Extract the authors of articles:

export PYTHONIOENCODING=utf-8

for year in $(seq 1987 2007); do

./nyt.py --author ../nyt_corpus_${year}.tar.gz \

| sed -e "s/^nyt_corpus_//" -e "s/\.har\//\//" -e "s/\.xml\t/\t/" \

| sort >> nyt_authors.tsv

done

Compute frequency distribution over all articles:

cut -d$'\t' -f2 nyt_authors.tsv | sort -S1G | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

> nyt_authors_distrib.tsv

Check the number of authors (labelled “categories” in the output) and list the most frequent ones:

echo articles $(wc -l < nyt_authors.tsv)

echo categories $(wc -l < nyt_authors_distrib.tsv)

echo ""

sort -t$'\t' -nrk2 nyt_authors_distrib.tsv | head

| articles | 1854726 |

|---|---|

| categories | 30691 |

| (empty) | 961052 |

| Elliott, Stuart | 6296 |

| Holden, Stephen | 5098 |

| Chass, Murray | 4544 |

| Pareles, Jon | 4090 |

| Brozan, Nadine | 3741 |

| Fabricant, Florence | 3659 |

| Kozinn, Allan | 3654 |

| Curry, Jack | 3654 |

| Truscott, Alan | 3646 |

The author metadata requires clean-up: 961052 articles, more than half of the corpus, have no author entry.

Collect the authors of the articles that contain VA and compare them with the distribution over all articles:

echo "VA" $(../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T ../README.org | wc -l) articles $(wc -l < ../nyt_authors.tsv)

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T -f ../README.org | join ../nyt_authors.tsv - | sed "s/ /\t/" | awk -F'\t' '{print $2}' \

| sort | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

| join -t$'\t' -o1.2,1.1,2.2 - ../nyt_authors_distrib.tsv \

| sort -nr | head -n20

| VA | 2646 | author | articles | 1854726 |

|---|---|---|---|---|

| 411 | 15.5% | (empty) | 961052 | 51.8% |

| 30 | 1.1% | Holden, Stephen | 5098 | 0.3% |

| 29 | 1.1% | Maslin, Janet | 2874 | 0.2% |

| 26 | 1.0% | Vecsey, George | 2739 | 0.1% |

| 23 | 0.9% | Sandomir, Richard | 3140 | 0.2% |

| 22 | 0.8% | Ketcham, Diane | 717 | 0.0% |

| 20 | 0.8% | Kisselgoff, Anna | 2661 | 0.1% |

| 19 | 0.7% | Dowd, Maureen | 1647 | 0.1% |

| 19 | 0.7% | Berkow, Ira | 1704 | 0.1% |

| 18 | 0.7% | Kimmelman, Michael | 1515 | 0.1% |

| 17 | 0.6% | Brown, Patricia Leigh | 568 | 0.0% |

| 16 | 0.6% | Pareles, Jon | 4090 | 0.2% |

| 16 | 0.6% | Chass, Murray | 4544 | 0.2% |

| 15 | 0.6% | Smith, Roberta | 2497 | 0.1% |

| 15 | 0.6% | Lipsyte, Robert | 817 | 0.0% |

| 15 | 0.6% | Grimes, William | 1368 | 0.1% |

| 15 | 0.6% | Barron, James | 2188 | 0.1% |

| 15 | 0.6% | Anderson, Dave | 2735 | 0.1% |

| 14 | 0.5% | Stanley, Alessandra | 1437 | 0.1% |

| 14 | 0.5% | Haberman, Clyde | 2492 | 0.1% |

List of All VA Coined by the Two Top-Scoring Authors

Stephen Holden

# extract list of articles

for article in $(../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T -f ../README.org \

| join ../nyt_authors.tsv - | grep "Holden, Stephen" | cut -d' ' -f1 ); do

grep "$article" ../README.org

done

- Scott Joplin (1987/01/20/0005135) High points of the show included the obscure Cole Porter bonbons, ‘‘Two Little Babes In the Wood’’ and ‘‘Nobody’s Chasing Me,’’ Eubie Blake and Noble Sissle’s ‘‘I’m Just Wild About Harry’’ (performed both as a waltz and as a one-step to show how a simple time change can alter a song’s character), and piano compositions by Ernesto Nazareth, ‘‘the Scott Joplin of Brazil,’’ that blended ragtime and tango.

- Irving Berlin (1987/02/08/0011525) Noel Gay was not, as some have claimed, the Irving Berlin of England.

- Joe DiMaggio (1987/05/16/0040728) the Joe DiMaggio of love,’’ he fantasizes while flexing a bicep that refuses to bulge

- George Jessel (1987/05/27/0044042) Compared to the younger smoothies, Mr. Altman, who called himself ‘‘the George Jessel of intellectuals,’’ addressed the audience from the standpoint of an embattled, aging hipster commenting amusingly on everything from the relationship between food and language to condom advertising.

- Evel Knievel (1988/02/05/0116272) ‘‘Lear,’’ directed by Lee Breuer and featuring Ruth Maleczech as the aged king and Greg Mehrten as a drag-queen Fool, has created some excited word of mouth since early work-in-progress performances began at the George Street Playhouse in New Brunswick, N.J. Other high points of the marathon are likely to be Karen Finley performing an excerpt from her scabrously obscene monologue ‘‘The Constant State of Desire,’’ the Alien Comic (Tom Murrin) dressed as an electrified lemon tree, and an appearance by David Leslie, the Evel Knievel of performance artists.

- Jimi Hendrix (1988/05/11/0144027) Yomo Toro, who has been called ‘‘the Jimi Hendrix of the cuatro,’’ will appear at Sounds of Brazil (204 Varick Street) tomorrow for two shows.

- Ed Sullivan (1988/05/12/0144329) Mike, an invented character who is the comic alter ego of the performance artist Michael Smith, is busy becoming the Ed Sullivan of the downtown performance world.

- Clint Eastwood (1989/01/16/0214485) Mr. O’Keefe, a playwright and actor whose surreal family drama ‘‘All Night Long’’ was produced in 1984 in New York at Second Stage, might be described as the Clint Eastwood of performance artists.

- James Dean (1989/03/17/0232294) ‘‘Let’s Get Lost,’’ the second feature by the successful fashion photographer Bruce Weber, focuses on the life and times of Chet Baker, the jazz trumpeter and heroin addict who has been called the James Dean of jazz.

- James Dean (1989/04/02/0236730) Handsome and talented but imperiously self-destructive, the man who has been called ‘‘the James Dean of jazz’’ was a connoisseur of fast cars, women and drugs.

- Bob Marley (1989/11/22/0303163) One of the anthology’s strongest cuts, ‘‘Ayiti Pa Fore’’ (‘‘Haiti Is Not a Forest’) was recorded in 1988 and features Manno Charlemagne, a singer and songwriter who is regarded as the Bob Marley of Haiti.

- Lenny Bruce (1989/12/13/0308717) Many of his Israeli songs are collaborations with Jonathan Geffen, an journalist and writer whom he described ‘‘as the Lenny Bruce of our time there.’’

- Spike Jones (1990/08/29/0380281) In ‘‘Don Henley Must Die,’’ one of the year’s funniest pop songs, Mojo Nixon, a performer who might be described as the Spike Jones of rock-and-roll, demands the electric chair for the former Eagle as punishment for his being ‘‘pretentious’’ and ‘‘whining like a wounded beagle.’’

- Nelson Riddle (1990/11/26/0404159) “Buried in Blue,” which ends the second act, is one of several numbers in the show in which the band is joined by strings, arranged and conducted by Marc Shaiman, the gifted young arranger and composer who is becoming the Nelson Riddle of his generation.

- Stephen Sondheim (1991/02/06/0420740) In the elegant precision and savage acuity of lyrics for songs like “Blizzard of Lies,” “The Wheelers and the Dealers,” “My Attorney Bernie,” “Can’t Take You Nowhere” and “I’m Hip,” to name several of the roughly 100 songs he’s written, Mr. Frishberg might be described as the Stephen Sondheim of jazz songwriting.

- Neil Simon (1991/05/28/0448667) A pioneer of the Off Off Broadway experimental theater movement in the 1960’s, Mr. Eyen was called the Neil Simon of Off Off Broadway at one point when he had four plays running simultaneously.

- Charles Bronson (1992/02/29/0510431) And even his wife becomes “the Charles Bronson of organic gardening.”

- Bob Dylan (1992/09/11/0555702) Although the 50-year-old Brazilian singer and songwriter has been called the Bob Dylan of Brazil, he is more than that.

- Nelson Riddle (1992/09/11/0555702) They have been lavishly arranged by Ray Santos, the Nelson Riddle of Latin American pop.

- Elvis Presley (1992/09/30/0559861) He is remembered as the “the Elvis Presley of African politics” and called a lion, a giant and a prophet.

- Vanilla Ice (1992/12/27/0579154) – Billy Ray Cyrus could be the Vanilla Ice of country.

- Jimi Hendrix (1993/03/26/0598111) Sugar Blue, who has been called the Jimi Hendrix of the harmonica, has played with everyone from Willie Dixon to the Rolling Stones.

- Pete Seeger (1994/01/07/0660595) Ladino, one of the three major Jewish languages, has produced a rich and extensive repertory of Judeo-Spanish songs, many of which have been collected by Joseph Elias, who is regarded as the Pete Seeger of Ladino music.

- Donald Trump (1994/03/04/0672349) Unbeknownst to Jack until it’s too late, his hostage, Natalie Voss (Kristy Swanson), happens to be the only daughter of a publicity-hungry billionaire (Ray Wise) known as “the Donald Trump of California.”

- Pieter Brueghel the Elder (1994/09/27/0714747) The art critic Robert Hughes calls Mr. Crumb “the Bruegel of the 20th century.”

- James Dean (1996/01/25/0825448) Mr. Cybulski’s performance, full of cynical bravado, established him as the James Dean of Poland.

- Jim Morrison (1996/01/31/0826617) But “Excess and Punishment,” which opens today at the Film Forum, makes no attempt to lionize Schiele as the Jim Morrison of Austrian Expressionists.

- Patrick Swayze (1998/05/22/1018818) If Mr. Fraser continues to take such roles, he could become the 90’s answer to the Patrick Swayze of ‘‘Dirty Dancing.’’

- João Gilberto (2005/03/09/1655600) Rosa Passos, an ardent disciple of João Gilberto, the Brazilian singer, guitarist and bossa nova pioneer, has been called ‘‘the João Gilberto of skirts’’ in her native Brazil.

- James Stewart (2006/11/11/1803780) Thus spoke this singer-songwriter, who might be described as the Jimmy Stewart of folk rock, in his first Manhattan concert in five years.

Janet Maslin

# extract list of articles

for article in $(../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T -f ../README.org \

| join ../nyt_authors.tsv - | grep "Maslin, Janet" | cut -d' ' -f1 ); do

grep "$article" ../README.org

done

- Bob Hope (1993/04/23/0604282) is loaded with rap-related cameos that work only if you recognize the players (Fab 5 Freddy, Kid Capri, Naughty by Nature and the Bob Hope of rap cinema, Ice-T), and have little intrinsic humor of their own.

- Sandy Dennis (1993/09/03/0632371) (Ms. Lewis, who has many similar mannerisms, may be fast becoming the Sandy Dennis of her generation.)

- Adolf Hitler (1994/02/04/0666537) The terrors of the code, as overseen by Joseph Breen (who was nicknamed “the Hitler of Hollywood” in some quarters), went beyond the letter of the document and brought about a more generalized moral purge.

- Hulk Hogan (1994/10/25/0720551) Libby’s cousin Andrew, an art director who’s “so incredibly creative that, as my mother says, no one’s holding their breath for grandchildren,” opines that “David Mamet is the Hulk Hogan of the American theater and that his word processor should be tested for steroids.”

- Andrew Dice Clay (1995/09/22/0790066) Mr. Ezsterhas, the Andrew Dice Clay of screenwriting, bludgeons the audience with such tirelessly crude thoughts that when a group of chimps get loose in the showgirls’ dressing room and all they do is defecate, the film enjoys a rare moment of good taste.

- Thomas Jefferson (1996/01/24/0825044) Last year’s overnight sensation, Edward Burns of “The Brothers McMullen,” came out of nowhere and now has Jennifer Aniston acting in his new film and Robert Redford, the Thomas Jefferson of Sundance, helping as a creative consultant.

- Elliott Gould (1996/03/08/0835139) All coy grins and daffy mugging, Mr. Stiller plays the role as if aspiring to become the Elliott Gould of his generation.

- Charlie Parker (1996/08/09/0870295) But for all its admiration, ‘‘Basquiat’’ winds up no closer to that assessment than to the critic Robert Hughes’s more jaundiced one: ‘‘Far from being the Charlie Parker of SoHo (as his promoters claimed), he became its Jessica Savitch.’’

- Aesop (1996/08/09/0870300) Eric Rohmer’s ‘‘Rendezvous in Paris’’ is an oasis of contemplative intelligence in the summer movie season, presenting three graceful and elegant parables with the moral agility that distinguishes Mr. Rohmer as the Aesop of amour.

- Diana Vreeland (1997/06/06/0934955) The complex aural and visual style of ‘‘The Pillow Book’’ involves rectangular insets that flash back to Sei Shonagon (a kind of Windows 995) and illustrate the imperious little lists that made her sound like the Diana Vreeland of 10th-century tastes.

- Thomas Edison (1997/09/19/0958685) Danny DeVito embodies this as a gleeful Sid Hudgens (a character whom Mr. Hanson has called ‘‘the Thomas Edison of tabloid journalism’’), who is the unscrupulous editor of a publication called Hush-Hush and winds up linked to many of the other characters’ nastiest transgressions.

- John Wayne (1997/09/26/0960422) Mr. Hopkins, whose creative collaboration with Bart goes back to ‘‘Legends of the Fall,’’ has called him ‘‘the John Wayne of bears.’’

- Annie Oakley (1997/12/24/0982708) Running nearly as long as ‘‘Pulp Fiction’’ even though its ambitions are more familiar and small, ‘‘Jackie Brown’’ has the makings of another, chattier ‘‘Get Shorty’’ with an added homage to Pam Grier, the Annie Oakley of 1970’s blaxploitation.

- Buster Keaton (1998/09/18/1047276) Fortunately, being the Buster Keaton of martial arts, he makes a doleful expression and comedic physical grace take the place of small talk.

- Michelangelo (1998/09/25/1049076) She goes to a plastic surgeon (Michael Lerner) who’s been dubbed ‘‘the Michelangelo of Manhattan’’ by Newsweek.

- Brian Wilson (1998/12/31/1073562) The enrapturing beauty and peculiar naivete of ‘‘The Thin Red Line’’ heightened the impression of Terrence Malick as the Brian Wilson of the film world.

- Dante Alighieri (1999/10/22/1147181) Though his latest film explores one more urban inferno and colorfully reaffirms Mr. Scorsese’s role as the Dante of the Cinema, creating its air of nocturnal torment took some doing.

- Albert Einstein (2000/12/07/1253134) In this much coarser and more violent, action-heavy story, Mr. Deaver presents the villainous Dr. Aaron Matthews, whom a newspaper once called ‘‘the Einstein of therapists’’ in the days before Hannibal Lecter became his main career influence.

- Émile Zola (2001/03/09/1276449) George P. Pelecanos arrives with the best possible recommendations from other crime writers (e.g., Elmore Leonard likes him), and with jacket copy praising him as ‘‘the Zola of Washington, D.C.’’ But what he really displays here, in great abundance and to entertaining effect, is a Tarantino touch.

- Leonard Cohen (2002/08/22/1417676) The wry, sexy melancholy of his observations would be seductive enough in its own right – he is the Leonard Cohen of the spy genre – even without the sharp political acuity that accompanies it.

- Kato Kaelin (2003/04/07/1478881) Then he has settled in – as ‘‘a permanent house guest, the Kato Kaelin of the wine country,’’ in the case of Alan Deutschman – and tried to figure out what it all means.

- Hulk Hogan (2003/04/14/1480850) Meanwhile, at 5 feet 10 tall and 115 pounds, Andy is the Hulk Hogan of this food-phobic crowd.

- Nora Roberts (2003/04/17/1481531) For those who write like clockwork (i.e., Stuart Woods, the Nora Roberts of mystery best-sellerdom), a new book every few months is no surprise.

- Henny Youngman (2004/03/05/1563840) Together Mr. Yetnikoff and Mr. Ritz devise a kind of sitcom snappiness that turns Mr. Yetnikoff into the Henny Youngman of CBS.

- Frank Stallone (2004/09/20/1612886) He can read the biblical story of Aaron and imagine ‘‘the Frank Stallone of ancient Judaism.’’

- Marlon Brando (2005/11/08/1715899) He named his daughter Tuesday, after the actress Tuesday Weld, whom Sam Shepard once called ‘‘the Marlon Brando of women.’’

- Jesse James (2005/12/09/1723424) How else to explain ‘‘Comma Sense,’’ which has a blurb from Ms. Truss and claims that the apostrophe is the Jesse James of punctuation marks?

- Elton John (2006/12/11/1811150) Though Foujita had a fashion sense that made him look like the Elton John of Montparnasse (he favored earrings, bangs and show-stopping homemade costumes), and though he is seen here hand in hand with a male Japanese friend during their shared tunic-wearing phase, he is viewed by Ms. Birnbaum strictly as a lady-killer.

- Ernest Hemingway (2007/04/30/1844006) Mr. Browne also points out that when he introduced Mr. Zevon to an audience as ‘‘the Ernest Hemingway of the twelve-string guitar,’’ Mr. Zevon said he was more like Charles Bronson.

Relative Frequency

The author table above ranks authors by the absolute number of VA they used. Naturally, authors who wrote more articles had more opportunities to use VA expressions, so let us also compare relative frequencies. For each author, we compute the average number of articles per VA, that is, the number of articles divided by the number of VA. The smaller this number, the more often the author uses VA; a value of 18 would mean that, on average, a VA occurs in every 18th article. To filter out authors who wrote only occasionally for the NYT, we consider only authors with at least 1000 articles.

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T -f ../README.org \

| join ../nyt_authors.tsv - | sed "s/ /\t/" | awk -F'\t' '{print $2}' \

| sort | uniq -c \

| sed -e "s/^ *//" -e "s/ /\t/" | awk -F'\t' '{print $2"\t"$1}' \

| join -t$'\t' -o1.2,2.2,1.1 - ../nyt_authors_distrib.tsv \

| awk -F$'\t' '{if ($2 >= 1000) printf "%3.1f\t%i\t%i\t%s\n", $2/$1, $1, $2, $3}' \

| LC_NUMERIC=en_US.UTF-8 sort -n | head -n20

| articles per VA | VA | articles | author |

|---|---|---|---|

| 84.2 | 18 | 1515 | Kimmelman, Michael |

| 86.7 | 19 | 1647 | Dowd, Maureen |

| 89.7 | 19 | 1704 | Berkow, Ira |

| 91.2 | 15 | 1368 | Grimes, William |

| 99.1 | 29 | 2874 | Maslin, Janet |

| 102.6 | 14 | 1437 | Stanley, Alessandra |

| 105.3 | 26 | 2739 | Vecsey, George |

| 111.4 | 11 | 1225 | Strauss, Neil |

| 112.6 | 10 | 1126 | Scott, A O |

| 112.9 | 10 | 1129 | Rich, Frank |

| 113.0 | 12 | 1356 | Apple, R W Jr |

| 132.5 | 12 | 1590 | Longman, Jere |

| 133.1 | 20 | 2661 | Kisselgoff, Anna |

| 136.5 | 23 | 3140 | Sandomir, Richard |

| 138.6 | 14 | 1940 | Araton, Harvey |

| 139.5 | 13 | 1814 | Martin, Douglas |

| 139.9 | 10 | 1399 | Verhovek, Sam Howe |

| 145.9 | 15 | 2188 | Barron, James |

| 146.0 | 8 | 1168 | Gates, Anita |

| 154.6 | 9 | 1391 | Collins, Glenn |
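
The ranking can be reproduced with a few lines of Python. This is only a sketch of the computation done by the awk step above; the function name articles_per_va is made up, and the sample counts are copied from the author tables in this file.

```python
def articles_per_va(va_by_author, articles_by_author, min_articles=1000):
    """Return rows (articles per VA, VA, articles, author), smallest ratio first."""
    rows = []
    for author, va in va_by_author.items():
        articles = articles_by_author[author]
        if articles >= min_articles:  # drop occasional contributors
            rows.append((round(articles / va, 1), va, articles, author))
    return sorted(rows)


# Counts taken from the author tables in this file
va_by_author = {"Holden, Stephen": 30, "Kimmelman, Michael": 18,
                "Dowd, Maureen": 19, "Ketcham, Diane": 22}
articles_by_author = {"Holden, Stephen": 5098, "Kimmelman, Michael": 1515,
                      "Dowd, Maureen": 1647, "Ketcham, Diane": 717}
ranking = articles_per_va(va_by_author, articles_by_author)
for row in ranking:
    print(row)
```

The example also shows the effect of the threshold: Diane Ketcham has 22 VA in only 717 articles and would lead the ranking, but she is excluded because she wrote fewer than 1000 articles.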

Modifiers

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -o -T ../README.org | sort | uniq -c | sort -nr | head -n30

| count | modifier |

|---|---|

| 55 | his day |

| 33 | his time |

| 29 | Japan |

| 16 | tennis |

| 16 | his generation |

| 16 | baseball |

| 15 | China |

| 13 | her time |

| 13 | her day |

| 12 | our time |

| 11 | the 1990’s |

| 10 | the Zulus |

| 10 | the 90’s |

| 10 | politics |

| 10 | hockey |

| 10 | Brazil |

| 10 | basketball |

| 10 | ballet |

| 9 | jazz |

| 9 | fashion |

| 8 | today |

| 8 | Israel |

| 8 | his era |

| 8 | hip-hop |

| 8 | golf |

| 8 | dance |

Time

“Today”

Who are the sources for the modifier “… of today”?

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \

| grep "of\* /today/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 1 | Shoeless Joe Jackson |

| 1 | Buck Rogers |

| 1 | Bill McGowan |

| 1 | William F. Buckley Jr. |

| 1 | Ralph Fiennes |

| 1 | Julie London |

| 1 | Jimmy Osmond |

| 1 | Harry Cohn |

“His Day” or “His Time”

Who are the sources for the modifiers “… of his day”, “… of his time”, and “… of his generation”?

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \

| grep "of\* /his \(day\|time\|generation\)/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr | head

| count | source |

|---|---|

| 3 | Michael Jordan |

| 2 | Mike Tyson |

| 2 | Billy Martin |

| 2 | Dan Quayle |

| 2 | Arnold Schwarzenegger |

| 2 | Martha Stewart |

| 2 | Donald Trump |

| 2 | L. Ron Hubbard |

| 2 | Tiger Woods |

| 1 | Lawrence Taylor |
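
The grep and awk steps used for the time modifiers can be mirrored in Python. The sketch below assumes that each output line of org.py is tab-separated, with the source in the second field and the modifier marked up as of* /modifier/ somewhere in the line; this format is only inferred from the grep and awk expressions above, and the sample lines are made up.

```python
import re
from collections import Counter

# Same alternatives as in the grep expression above
PATTERN = re.compile(r"of\* /his (day|time|generation)/")


def count_sources(lines):
    """Count the sources (second field) of all lines matching the modifier pattern."""
    counts = Counter()
    for line in lines:
        if PATTERN.search(line):
            counts[line.split("\t")[1]] += 1
    return counts


# Made-up sample lines in the assumed output format of org.py
sample = [
    "id-1\tMichael Jordan\tthe *Michael Jordan* *of* /his day/",
    "id-2\tMichael Jordan\tthe *Michael Jordan* *of* /his generation/",
    "id-3\tMike Tyson\tthe *Mike Tyson* *of* /his time/",
    "id-4\tMadonna\tthe *Madonna* *of* /her day/",
]
source_counts = count_sources(sample)
for source, count in source_counts.most_common():
    print(count, source)
```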

“Her Day”

Who are the sources for the modifier “… of her day”?

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \

| grep "of\* /her day/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 1 | Hilary Swank |

| 1 | Hillary Clinton |

| 1 | Marilyn Monroe |

| 1 | Judith Krantz |

| 1 | Lucia Pamela |

| 1 | Elizabeth Taylor |

| 1 | Imelda Marcos |

| 1 | Laurie Anderson |

| 1 | Nell Gwyn |

| 1 | Annie Leibovitz |

| 1 | Tara Reid |

| 1 | Madonna |

| 1 | Maria Callas |

Country

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -o -T ../README.org \

| sort | uniq -c | sort -nr | grep "Japan\|China\|Brazil\|Iran\|Israel\|Mexico\|India\|South Africa\|Spain\|South Korea\|Russia\|Poland\|Pakistan" | head -n13

| count | country |

|---|---|

| 29 | Japan |

| 15 | China |

| 10 | Brazil |

| 8 | Israel |

| 7 | Iran |

| 7 | India |

| 4 | South Africa |

| 4 | Mexico |

| 3 | Spain |

| 3 | South Korea |

| 3 | Russia |

| 3 | Poland |

| 3 | Pakistan |

Who are the sources for the individual country modifiers?

“Japan”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \

| grep "of\* /Japan/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 5 | Walt Disney |

| 4 | Bill Gates |

| 2 | Nolan Ryan |

| 2 | Frank Sinatra |

| 1 | Richard Perle |

| 1 | Thomas Edison |

| 1 | Cal Ripken |

| 1 | Walter Johnson |

| 1 | Andy Warhol |

| 1 | Pablo Picasso |

| 1 | William Wyler |

| 1 | Stephen King |

| 1 | Brad Pitt |

| 1 | Richard Avedon |

| 1 | P. D. James |

| 1 | Rem Koolhaas |

| 1 | Steve Jobs |

| 1 | Ralph Nader |

| 1 | Madonna |

| 1 | Jack Kerouac |

“China”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /China/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 4 | Barbara Walters |

| 2 | Jack Welch |

| 1 | Louis XIV of France |

| 1 | Oskar Schindler |

| 1 | Napoleon |

| 1 | Keith Haring |

| 1 | Mikhail Gorbachev |

| 1 | Donald Trump |

| 1 | Larry King |

| 1 | Ted Turner |

| 1 | Madonna |

“Brazil”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /Brazil/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 1 | Giuseppe Verdi |

| 1 | Jil Sander |

| 1 | Walter Reed |

| 1 | Lech Wałęsa |

| 1 | Jim Morrison |

| 1 | Bob Dylan |

| 1 | Elvis Presley |

| 1 | Scott Joplin |

| 1 | Larry Bird |

| 1 | Pablo Escobar |

Sports

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -o -T ../README.org \
  | sort | uniq -c | sort -nr | grep "baseball\|basketball\|tennis\|golf\|football\|racing\|soccer\|sailing" | head -n7

| count | sports |

|---|---|

| 16 | tennis |

| 16 | baseball |

| 10 | basketball |

| 8 | golf |

| 7 | football |

| 6 | soccer |

| 6 | racing |

Who are the sources for the modifier … ?

“Tennis”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /tennis/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 2 | George Foreman |

| 1 | Tim McCarver |

| 1 | Pete Rose |

| 1 | Nolan Ryan |

| 1 | Crash Davis |

| 1 | Spike Lee |

| 1 | John Madden |

| 1 | Michael Jordan |

| 1 | John Wayne |

| 1 | George Hamilton |

| 1 | Michael Dukakis |

| 1 | Jackie Robinson |

| 1 | Babe Ruth |

| 1 | Dennis Rodman |

| 1 | Madonna |

“Baseball”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /baseball/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 2 | P. T. Barnum |

| 2 | Larry Bird |

| 1 | Clifford Irving |

| 1 | Mike Tyson |

| 1 | Thomas Dooley |

| 1 | Marco Polo |

| 1 | Pablo Picasso |

| 1 | Horatio Alger |

| 1 | Rodney Dangerfield |

| 1 | Michael Jordan |

| 1 | Alan Alda |

| 1 | Brandon Tartikoff |

| 1 | Howard Hughes |

| 1 | Thomas Jefferson |

“Basketball”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /basketball/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 2 | Babe Ruth |

| 1 | Joseph Stalin |

| 1 | Martin Luther King, Jr. |

| 1 | Pol Pot |

| 1 | Johnny Appleseed |

| 1 | Adolf Hitler |

| 1 | Bugsy Siegel |

| 1 | Elvis Presley |

| 1 | Chuck Yeager |

“Golf”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /golf/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 2 | Michael Jordan |

| 2 | Jackie Robinson |

| 1 | J. D. Salinger |

| 1 | James Brown |

| 1 | Marlon Brando |

| 1 | Babe Ruth |

“Football”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /football/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 1 | Ann Calvello |

| 1 | Bobby Fischer |

| 1 | Patrick Henry |

| 1 | Susan Lucci |

| 1 | Jackie Robinson |

| 1 | Babe Ruth |

| 1 | Rich Little |

“Soccer”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /soccer/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 1 | James Brown |

| 1 | Michael Jordan |

| 1 | Larry Brown |

| 1 | Derek Jeter |

| 1 | Ernie Banks |

| 1 | Magic Johnson |

“Racing”

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -w -T -t -c ../README.org \
  | grep "of\* /racing/" | awk -F'\t' '{print $2}' | sort | uniq -c | sort -nr

| count | source |

|---|---|

| 2 | Rodney Dangerfield |

| 1 | John Madden |

| 1 | Bobo Holloman |

| 1 | Lou Gehrig |

| 1 | Wayne Gretzky |

Culture

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -T -o ../README.org \
  | sort | uniq -c | sort -nr | grep "dance\|hip-hop\|jazz\|fashion\|weaving\|ballet\|the art world\|wine\|salsa" | head -n8

| count | modifier |

|---|---|

| 10 | ballet |

| 9 | jazz |

| 9 | fashion |

| 8 | hip-hop |

| 8 | dance |

| 7 | the art world |

| 4 | wine |

| 4 | salsa |

Michael Jordan

../org.py -T -l -o ../README.org | awk -F'\t' '{if ($1 == "Michael Jordan") print $2}' \
  | sort -u

the Michael Jordan of

- …

- 12th men

- actresses

- Afghanistan

- Australia

- baseball

- BMX racing

- boxing

- Brazilian basketball for the past 20 years

- college coaches

- computer games

- cricket

- cyberspace

- dance

- diving

- dressage horses

- fast food

- figure skating

- foosball

- game shows

- geopolitics

- golf

- Harlem

- her time

- his day

- his sport

- his team

- his time

- hockey

- horse racing

- hunting and fishing

- Indiana

- integrating insurance and health care

- julienne

- jumpers

- language

- Laser sailing

- late-night TV

- management in Digital

- Mexico

- motocross racing in the 1980’s

- orange juice

- recording

- Sauternes

- snowboarding

- soccer

- television puppets

- tennis

- the Buffalo team

- the dirt set

- the Eagles

- the game

- the Hudson

- the National Football League

- the South Korean penal system

- the sport

- the White Sox

- this sport

- women’s ball

- women’s basketball
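
The Michael Jordan query generalises to any source by passing the name into `awk` as a variable rather than hard-coding it. A minimal sketch follows. The name `modifiers_of` is hypothetical, and the sketch assumes the same two-column, tab-separated input (source, then modifier) that the command in this section reads from `org.py -T -l -o`.

```shell
# Hypothetical helper (not part of org.py): list the distinct modifiers
# used with one source.
# Assumption: stdin is tab-separated with the source in field 1 and the
# modifier in field 2.
modifiers_of() {
    # -v passes the shell argument into awk, so the name needs no quoting
    # tricks inside the awk program; sort -u removes duplicate modifiers.
    awk -F'\t' -v src="$1" '$1 == src {print $2}' | sort -u
}
```

For example, `../org.py -T -l -o ../README.org | modifiers_of "Babe Ruth"` would list the modifiers recorded for that source.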

Some Favourites

- Marquis de Sade (1993/09/26/0636952) When we introduced Word in October 1983, in its first incarnation it was dubbed the Marquis de Sade of word processors, which was not altogether unfair.

- Groucho Marx (1987/09/27/0077726) But the tide eventually shifted, partly because the supreme materialist of physics, Richard Feynman of the California Institute of Technology, a man once described as the Groucho Marx of physics, turned the quest for nuclear substructure into a cause celebre.

Complete List of Successfully Extracted VA

../org.py --ignore-source-ids fictional_humans_in_our_data_set.tsv -g -H -T ../README.org \
  | pandoc -f org -t markdown -o vossantos.md

The result is in vossantos.md.